Data-Driven Device Training at the Edge

Engineering Data-Driven Closed-Loop Learning Systems for Edge AI

Even though intelligence is executed locally at the edge, the long-term value of AI systems is realized through a continuous learning loop that connects edge devices with centralized cloud infrastructure. In production environments, this loop is not conceptual, it is a rigorously engineered data pipeline that spans deterministic ingestion, on-device persistence, feature materialization, inference, selective data extraction, and cloud-scale model retraining. The effectiveness of this system depends entirely on how well data is managed across both domains.

Training devices at the edge with real-time data enables systems to learn directly from their operating environment, improving accuracy, adaptability, and responsiveness. Instead of relying solely on pre-trained models from the cloud, edge devices continuously capture live sensor data, process it locally, and refine models based on actual conditions such as usage patterns, environmental changes, and system behavior. This approach reduces latency, preserves privacy, and allows immediate adaptation without constant connectivity. By leveraging structured, high-quality data pipelines on-device, edge systems can evolve from static inference engines into adaptive, self-improving systems that deliver more reliable and context-aware intelligence over time.

For edge device training, these constraints fundamentally shape how learning can occur on-device. Edge systems must train or adapt models within bounded memory, limited compute, strict real-time deadlines, and often without reliable connectivity, while still continuously ingesting high-frequency sensor data such as vibration, current, and temperature.

To make training feasible and effective, raw signals must be deterministically transformed into structured time-series with aligned timestamps, rolling windows, lag features, and aggregates that capture temporal behavior. These features become the foundation for incremental learning, model adaptation, or validation directly on the device. Critically, all data preparation and training-related operations must maintain predictable worst-case execution time (WCET) and avoid latency spikes, ensuring they do not interfere with control loops or safety-critical functions. In this way, edge training is not just about updating models locally, it is about engineering a deterministic, real-time data pipeline that enables safe, reliability, and continuous learning under tight system constraints.

For device training at the edge, persistence is a critical enabler of reliable and continuous learning. Edge systems must not only capture raw signals but also retain derived features, intermediate states, and inference outputs in a power-fail-safe manner so training can resume accurately after interruptions. This requires append-optimized storage, transactional integrity (such as write-ahead logging or copy-on-write), and fast recovery to prevent data loss during resets or power events. By preserving a complete on-device data lineage from sensor to signal to feature to inference and then action, devices can use historical context to refine models, validate behavior, and support incremental or online learning. This persistence ensures that training is not transient or fragile, but instead deterministic, traceable, and compliant, enabling edge devices to evolve safely and intelligently over time.

Transmitting all this data to the cloud is neither practical nor necessary. Bandwidth constraints, cost considerations, and privacy requirements demand selective data movement. Edge systems must intelligently filter and prioritize data for export, such as anomalies, statistical summaries, model drift indicators, or representative samples. This is where efficient encoding, batching, and reliable transport mechanisms become essential, ensuring that only high-value data is transmitted without disrupting real-time operation.

In the cloud, aggregated data from thousands or millions of devices is fused into large-scale datasets that capture diverse operating conditions, edge cases, and environmental variability. This enables advanced training workflows, including supervised learning, unsupervised anomaly detection, and continuous model evaluation.

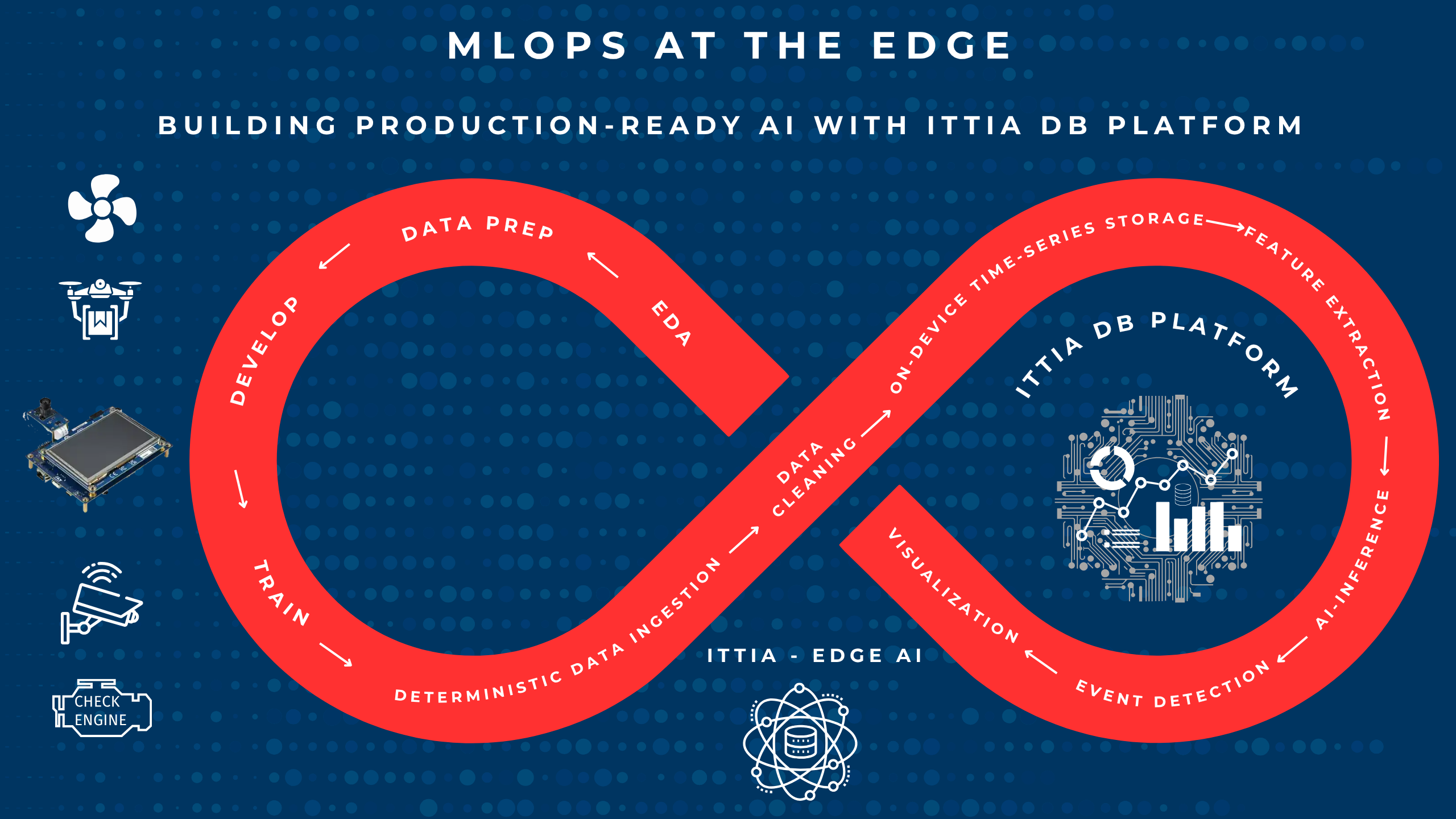

MLOps pipelines leverage this data to retrain models, validate performance across datasets, and optimize for accuracy, robustness, and generalization. Importantly, cloud-side analytics can also identify systemic trends, such as fleet-wide degradation patterns or rare failure modes, that are invisible to individual devices.

Once refined, models are versioned, validated, and deployed back to edge devices through controlled update mechanisms. These updates must be compatible with device constraints (e.g., memory footprint, compute architecture) and often require quantization or conversion (e.g., TensorFlow Lite Micro, CMSIS-NN) for efficient execution. The deployment process must also ensure safety and reliability, with rollback mechanisms and compatibility checks to prevent disruption.

Complete data management across the edge and the cloud is a key enabler of effective device training. At the edge, deterministic data pipelines capture, structure, and persist raw signals, features, and inference results, providing the high-quality, contextual data needed for on-device training, adaptation, or validation. This allows devices to learn from real-time conditions with low latency and without constant connectivity. In parallel, the cloud aggregates data from many devices, creating a richer and more diverse dataset for large-scale model retraining and optimization. Updated models are then deployed back to edge devices, closing the loop. Together, this end-to-cloud data flow ensures that device training is continuous, reliable, and grounded in real-world data, enabling systems to evolve intelligently while maintaining performance, traceability, and consistency.

Device Training Powered by Edge Data

This entire closed-loop system depends on a robust data infrastructure, this is where the ITTIA DB Platform becomes essential for effective device training. ITTIA DB Lite enables deterministic, real-time capture and transformation of sensor data into structured features directly on microcontrollers, providing the foundation for on-device learning and adaptation. ITTIA DB extends this capability to edge processors and gateways, where larger datasets can be managed for more advanced training, validation, and model refinement. ITTIA Analitica delivers visibility into signals, features, and inference outcomes, allowing developers to understand model behavior, validate training results, and improve accuracy over time. Meanwhile, ITTIA Data Connect ensures that the most valuable data is reliably transferred between edge devices and the cloud, enabling large-scale retraining and continuous model updates. Together, this integrated data infrastructure ensures that device training is not only possible at the edge, but also deterministic, traceable, and continuously improving based on real-world data.

- ITTIA DB Lite (MCUs) and ITTIA DB (MPUs) deliver deterministic, in-process data management with bounded resource usage. Their append-optimized, flash-aware storage engines minimize erase/write amplification while maintaining predictable latency, even during background operations such as garbage collection or wear leveling.

- Transactional integrity and power-fail-safe mechanisms (e.g., journaling, copy-on-write) ensure crash consistency and rapid recovery.

- Built-in support for time-series data modeling enables efficient storage of signals, features, and inference results with minimal overhead.

For observability and explainability:

- ITTIA Analitica provides on-device visualization and monitoring of time-series data, feature evolution, and model outputs. Engineers can inspect system behavior in real time, validate feature engineering pipelines, and detect drift or anomalies directly on the device, without relying solely on cloud tools.

For data movement:

- ITTIA Data Connect enables selective, reliable data distribution between MCU↔MPU tiers and from device to cloud. It supports filtering, aggregation, and prioritization of data streams, ensuring that only meaningful insights are transmitted while preserving bandwidth and maintaining system determinism.

Data Cleaning

Data cleaning is critical for device training at the edge because the quality of learning is directly determined by the quality of input data. Edge devices often operate in noisy, unpredictable environments where sensor signals can include outliers, missing values, drift, or inconsistencies. Without proper cleaning, such as filtering noise, clamping anomalies, normalizing ranges, and aligning timestamps, these imperfections propagate into features and ultimately degrade model accuracy and stability. For on-device training and adaptation, this is even more important, as models continuously learn from live data and can quickly reinforce errors if the input is not reliable. By ensuring that data is clean, consistent, and contextually structured before training, edge systems can produce more accurate, stable, and explainable models, enabling safe and effective learning in real-world conditions.

The ITTIA DB Platform provides a powerful foundation for data cleaning directly on edge devices, enabling reliable and effective training in real-world conditions. With ITTIA DB Lite on microcontrollers and ITTIA DB on higher-performance edge processors, raw sensor data can be deterministically ingested, aligned, and transformed into clean, structured time-series. The platform supports on-device data preparation techniques such as filtering noise, clamping outliers, handling missing values, normalizing ranges, and generating sliding windows and derived features—all in real time and with predictable latency. By ensuring that only high-quality, consistent data is used for training and inference, the ITTIA DB Platform prevents error propagation, improves model accuracy, and maintains stability during continuous learning. This enables edge devices to train and adapt confidently, with full data lineage and traceability to support explainability, validation, and compliance.

Conclusion

By integrating these components, the ITTIA DB Platform transforms edge devices into self-contained data systems that directly enable effective device training. At the edge, devices continuously capture, structure, and persist real-time data, generating high-quality features and maintaining full data lineage to support on-device learning, adaptation, and validation with deterministic guarantees. In parallel, the cloud aggregates data from many devices to perform large-scale training, refine models, and distribute improvements back to the fleet. This creates a true closed-loop training architecture: the edge provides immediacy, reliability, and context-rich data for local learning, while the cloud delivers scale, deeper model optimization, and coordinated evolution. Data is the bridge between them, ensuring that training is continuous, traceable, and grounded in real-world conditions. In this model, device training is not a one-time event, it is an ongoing, adaptive process powered by a resilient, end-to-cloud data pipeline.