MLOps at the Edge

Building Production-Ready AI with ITTIA DB Platform

Data is the foundation of Edge AI because it directly determines how accurate, reliable, and useful intelligence can be on a device. Unlike cloud-based systems, edge devices must operate with limited resources, intermittent connectivity, and real-time constraints, making high-quality, well-structured data essential. It is not enough to run an AI model; the system must continuously capture sensor signals, organize time-series data, clean and transform it into meaningful features, and preserve it safely for ongoing learning and validation. Without this disciplined data pipeline, models become fragile, unexplainable, and prone to failure in real-world conditions. When data is managed deterministically at the edge, with consistency, traceability, and power-fail safety, systems can evolve from simple inference engines into intelligent, self-observing devices capable of adapting, explaining decisions, and operating reliably in production environments.

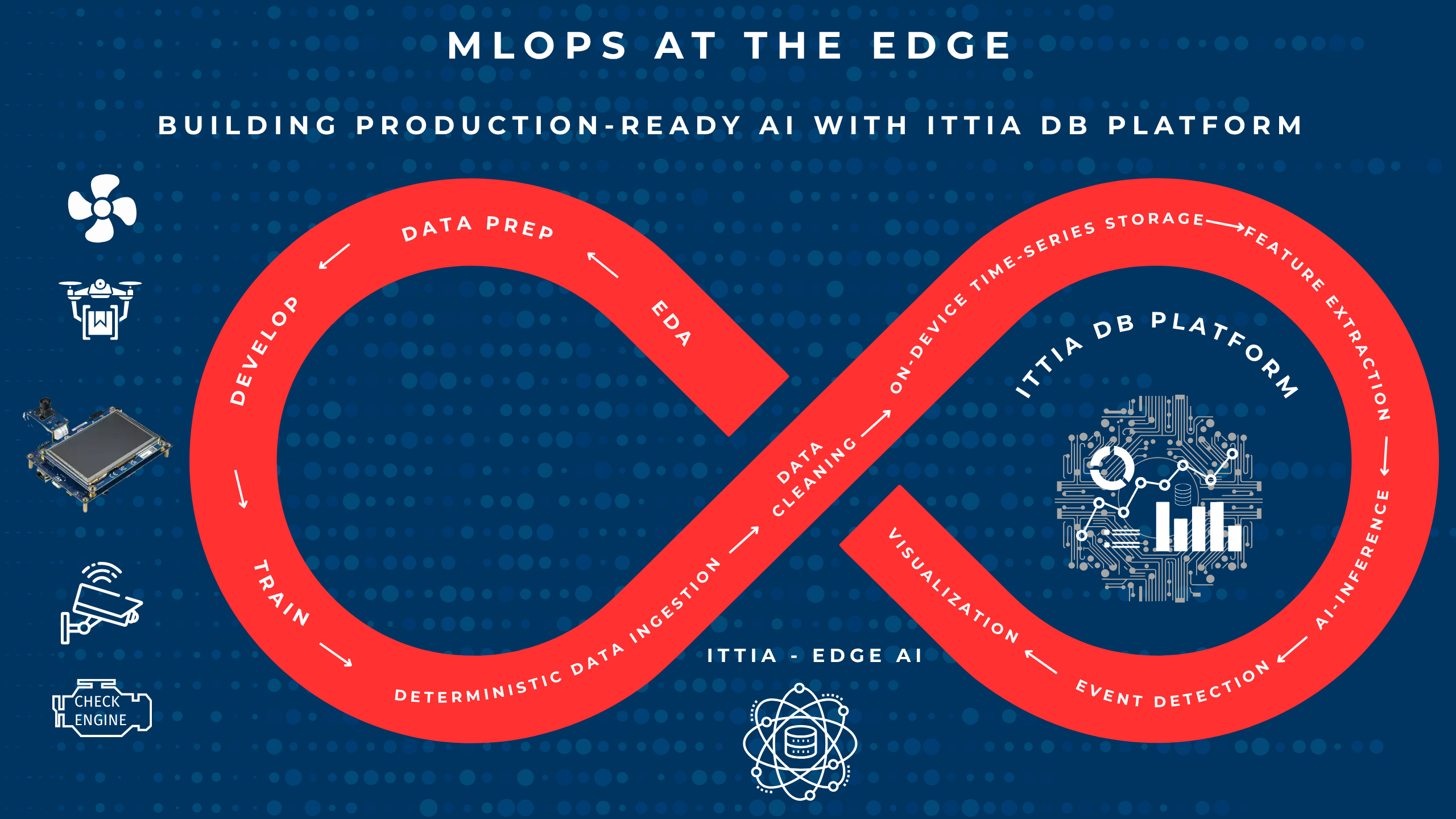

As artificial intelligence moves from cloud environments into embedded and edge devices, the concept of MLOps must evolve. MLOps (Machine Learning Operations) is the discipline of managing the full lifecycle of machine learning systems so they can run reliably in real-world environments. It combines data management, model development, deployment, monitoring, and continuous improvement into a structured, repeatable process. Instead of treating AI models as one-time experiments, MLOps ensures they remain accurate, scalable, and maintainable over time by continuously incorporating new data and feedback. In essence, MLOps transforms machine learning from isolated models into production-ready systems that deliver consistent, evolving intelligence.

Traditional MLOps pipelines focus on centralized data, scalable compute, and cloud-based model lifecycle management. However, Edge AI introduces a fundamentally different challenge: how to manage data, models, and outcomes directly on constrained, distributed devices. This is where the ITTIA DB Platform becomes a critical enabler, bringing MLOps capabilities to the edge with a strong focus on deterministic data management, observability, and lifecycle control.

The Shift from Cloud MLOps to Edge MLOps

In cloud environments, data is abundant, connectivity is assumed, and resources are elastic. At the edge, none of these assumptions hold. Devices must operate with limited memory and storage, intermittent connectivity, and strict real-time constraints. Yet they are expected to perform continuous data ingestion, feature extraction, inference, and decision-making.

Edge MLOps is therefore not just about deploying models; it is about managing the full lifecycle of data and intelligence on-device:

- Capturing high-frequency sensor data reliably

- Structuring and cleaning time-series data

- Preparing features for AI models

- Monitoring model performance and drift

- Maintaining explainability and traceability

- Enabling controlled data export for retraining

Without a robust data infrastructure, these tasks become fragmented and unreliable. ITTIA DB Platform provides a unified solution to address these challenges.

Data as the Foundation of Edge MLOps

At the core of any MLOps pipeline is data. The ITTIA DB Platform ensures that data is captured, stored, and managed in a deterministic, power-fail-safe, and flash-aware manner, even on resource-constrained devices.

With ITTIA DB Lite, developers can build reliable data pipelines on microcontrollers, ensuring bounded latency and consistent behavior. For more capable systems, ITTIA DB provides extended capabilities for complex queries and data processing.

This unified approach eliminates the need for fragmented buffers and file-based logging, replacing them with structured, queryable datasets that form the backbone of Edge AI systems.
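To make the contrast with file-based logging concrete, here is a minimal sketch of a structured, queryable sample store. It uses Python's built-in `sqlite3` module purely as a stand-in; it is not the ITTIA DB API, and the table layout and the function names `open_store`, `record_batch`, and `mean_between` are assumptions made for illustration.

```python
import sqlite3

def open_store(path=":memory:"):
    """Open a sample store (stand-in for an embedded database, not ITTIA DB)."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS samples ("
        " ts_ms INTEGER NOT NULL,"
        " sensor TEXT NOT NULL,"
        " value REAL NOT NULL,"
        " PRIMARY KEY (sensor, ts_ms))"
    )
    return conn

def record_batch(conn, rows):
    """Insert (ts_ms, sensor, value) rows as one all-or-nothing transaction."""
    # 'with conn' commits on success and rolls back if any insert fails,
    # so a partially written batch never becomes visible.
    with conn:
        conn.executemany(
            "INSERT INTO samples (ts_ms, sensor, value) VALUES (?, ?, ?)", rows
        )

def mean_between(conn, sensor, start_ms, end_ms):
    """Average value of one sensor over an inclusive time range."""
    row = conn.execute(
        "SELECT AVG(value) FROM samples"
        " WHERE sensor = ? AND ts_ms BETWEEN ? AND ?",
        (sensor, start_ms, end_ms),
    ).fetchone()
    return row[0]
```

The point of the sketch is the shape of the design: samples are written in transactions and read back with queries, rather than appended to ad hoc buffers and parsed later.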

On-Device Feature Engineering and Inference

One of the defining aspects of Edge MLOps is the ability to prepare and process data locally. ITTIA DB Platform supports time-series operations such as filtering, aggregation, lag features, and rolling window analysis directly on the device. This enables real-time feature extraction, ensuring that AI models receive high-quality, context-rich inputs.

By keeping data processing close to the source, developers can reduce latency, improve responsiveness, and maintain system autonomy, even in environments with limited or no connectivity.
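As an illustration of the rolling window and lag features mentioned above, the following sketch computes them incrementally in bounded memory, one sample at a time. It is plain Python rather than ITTIA DB functionality; the class name `RollingFeatures` and its parameters are hypothetical.

```python
from collections import deque

class RollingFeatures:
    """Incremental rolling-window and lag features over a sample stream."""

    def __init__(self, window, lag):
        if window < 1 or lag < 1:
            raise ValueError("window and lag must be >= 1")
        self.window = window
        self.lag = lag
        # Keep only as many samples as the largest look-back requires.
        self._buf = deque(maxlen=max(window, lag + 1))

    def update(self, value):
        """Add one sample and return the features for the current step."""
        self._buf.append(value)
        recent = list(self._buf)[-self.window:]
        # The lag feature is undefined until enough history has arrived.
        lagged = self._buf[-1 - self.lag] if len(self._buf) > self.lag else None
        return {
            "value": value,
            "rolling_mean": sum(recent) / len(recent),
            "rolling_min": min(recent),
            "rolling_max": max(recent),
            "lag": lagged,
        }
```

Calling `update` once per incoming sample yields a feature record that can be handed directly to a model, and memory use stays fixed regardless of how long the device runs.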

Observability and Explainability at the Edge

In production systems, understanding how AI models behave is essential. ITTIA DB Platform enables full observability of the data pipeline, allowing developers to monitor sensor data, feature transformations, and inference outcomes in real time.

With ITTIA Analitica, teams can query, visualize, and analyze data directly on the device, gaining insights into system behavior without relying on external tools. This capability is critical for debugging, tuning, and validating AI models in real-world conditions.

Equally important is explainability. ITTIA DB Platform maintains a complete lineage of data, from sensor to signal to feature to inference to action, enabling engineers to trace decisions back to their origins. This is essential for regulatory compliance, safety validation, and building trust in AI systems.
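One way to picture such a lineage is as a chain of records in which each record names the records it was derived from. The sketch below is an assumption about how this could be modeled in plain Python, not a description of how ITTIA DB Platform stores lineage; `Record`, `LineageLog`, and the stage names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Record:
    record_id: str
    stage: str          # "sensor", "signal", "feature", "inference", or "action"
    payload: dict
    parents: tuple = () # ids of the records this one was derived from

class LineageLog:
    """In-memory lineage graph (illustrative only)."""

    def __init__(self):
        self._records = {}

    def add(self, record):
        # Refuse records that point at unknown parents, so every
        # chain can always be followed back to raw sensor data.
        for parent_id in record.parents:
            if parent_id not in self._records:
                raise KeyError(f"unknown parent: {parent_id}")
        self._records[record.record_id] = record

    def trace(self, record_id):
        """Return the record and all of its ancestors, nearest first."""
        seen, order, queue = set(), [], [record_id]
        while queue:
            rid = queue.pop(0)
            if rid in seen:
                continue
            seen.add(rid)
            rec = self._records[rid]
            order.append(rec)
            queue.extend(rec.parents)
        return order
```

Given an action record, `trace` walks back through the inference, feature, and signal records to the sensor samples that produced it, which is the question an engineer or auditor typically needs answered.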

Secure and Controlled Data Movement

While Edge AI emphasizes local processing, cloud connectivity still plays a role in fleet-wide optimization and model retraining. ITTIA DB Platform enables controlled, secure data distribution through ITTIA Data Connect, ensuring that only relevant, curated datasets are transmitted.

This approach reduces bandwidth usage, enhances data privacy, and allows organizations to maintain control over their data pipelines while still benefiting from centralized analytics and continuous improvement.
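A minimal sketch of curation before export might look like the following. The selection rule (export only low-confidence inferences) and the field allowlist are assumptions chosen for illustration; they are not ITTIA Data Connect behavior, and `curate_for_export` is a hypothetical helper.

```python
def curate_for_export(records, allowed_fields, min_confidence):
    """Select records worth sending upstream and strip everything else.

    Assumption for this sketch: inferences the model was unsure about
    (confidence below min_confidence) are the most useful for retraining.
    """
    curated = []
    for rec in records:
        if rec["confidence"] >= min_confidence:
            continue  # confident result: keep it on the device only
        # Copy only allowlisted fields so nothing else leaves the device.
        curated.append({k: rec[k] for k in allowed_fields if k in rec})
    return curated
```

Filtering and field selection both happen on the device, so the bandwidth and privacy benefits do not depend on anything downstream.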

Continuous Improvement and Lifecycle Management

Edge MLOps is not a one-time deployment; it is a continuous process of monitoring, learning, and improving. ITTIA DB Platform supports this lifecycle by enabling:

- Persistent storage of inference results and performance metrics

- Detection of anomalies and model drift

- Feedback loops for model refinement

- Seamless integration with cloud-based retraining workflows

By maintaining structured, historical datasets on-device, developers can build systems that learn over time and adapt to changing conditions.
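Drift detection can take many forms. As one simple illustration, the sketch below compares the mean of a recent window of values against a reference mean and standard deviation recorded at training time, and flags drift when the difference exceeds a z-score threshold. The technique, the class name `DriftMonitor`, and the default threshold are assumptions for this example, not a method the platform prescribes.

```python
import math
from collections import deque

class DriftMonitor:
    """Flag drift when a recent window's mean departs from a reference."""

    def __init__(self, ref_mean, ref_std, window, threshold=3.0):
        if ref_std <= 0 or window < 1:
            raise ValueError("ref_std must be > 0 and window >= 1")
        self.ref_mean = ref_mean
        self.ref_std = ref_std
        self.threshold = threshold
        self._recent = deque(maxlen=window)

    def update(self, value):
        """Add one observation; return True if the window looks drifted."""
        self._recent.append(value)
        n = len(self._recent)
        if n < self._recent.maxlen:
            return False  # not enough data to judge yet
        window_mean = sum(self._recent) / n
        # Standard error of a mean of n samples from the reference distribution.
        std_err = self.ref_std / math.sqrt(n)
        z = abs(window_mean - self.ref_mean) / std_err
        return z > self.threshold
```

The monitored value could be an input feature or a model output score. Persisting the flag alongside inference results gives the feedback loop a concrete trigger for exporting data or scheduling retraining.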

Enabling Production-Grade Edge AI

The combination of deterministic data management, on-device processing, observability, and secure data movement makes ITTIA DB Platform a foundational technology for Edge MLOps. It allows developers to move beyond prototypes and build production-grade AI systems that are reliable, explainable, and scalable.

In industries such as automotive, industrial automation, energy, and medical devices, where reliability and safety are paramount, this capability is not optional; it is essential.

Conclusion

MLOps at the edge requires a new mindset, one that treats data as a first-class citizen and prioritizes reliability, determinism, and explainability. The ITTIA DB Platform brings these principles to life, enabling developers to manage the full lifecycle of Edge AI directly on embedded devices.

In the era of distributed intelligence, success is no longer defined by the power of models alone, but by the strength of the data infrastructure that supports them. ITTIA DB Platform provides that foundation, turning Edge AI into a scalable, trustworthy, and production-ready reality.