Where STM32 Meets Edge AI: Essential Data Management for Intelligent Devices

Accelerating Edge AI with Ease

As Edge AI moves from experimentation to production, developers face a familiar challenge: AI models are only as good as the data pipelines that support them. On STM32 devices, where resources, power, and real-time behavior matter, building reliable data management, processing, and AI enablement from scratch quickly becomes complex and error-prone.

AI data management and processing on microcontrollers (MCUs) is essential for making Edge AI reliable, efficient, and deployable at scale. Because MCU devices operate under tight constraints in memory, power, and real-time behavior, AI workloads must be supported by deterministic, lightweight data pipelines that safely capture, clean, and organize sensor data directly on the device. Effective MCU-level data management enables on-device filtering, feature extraction, and historical context for inference, reducing latency, preserving bandwidth, and eliminating dependence on constant cloud connectivity. By ensuring data integrity, predictability, and traceability, robust AI data management on MCUs transforms raw signals into trustworthy intelligence that can operate continuously in real-world, resource-constrained environments.

Integrating the ITTIA DB Platform with STM32 devices fundamentally changes this equation, delivering both ease of development and production-grade robustness for Edge AI systems.

Simplifying Development on STM32

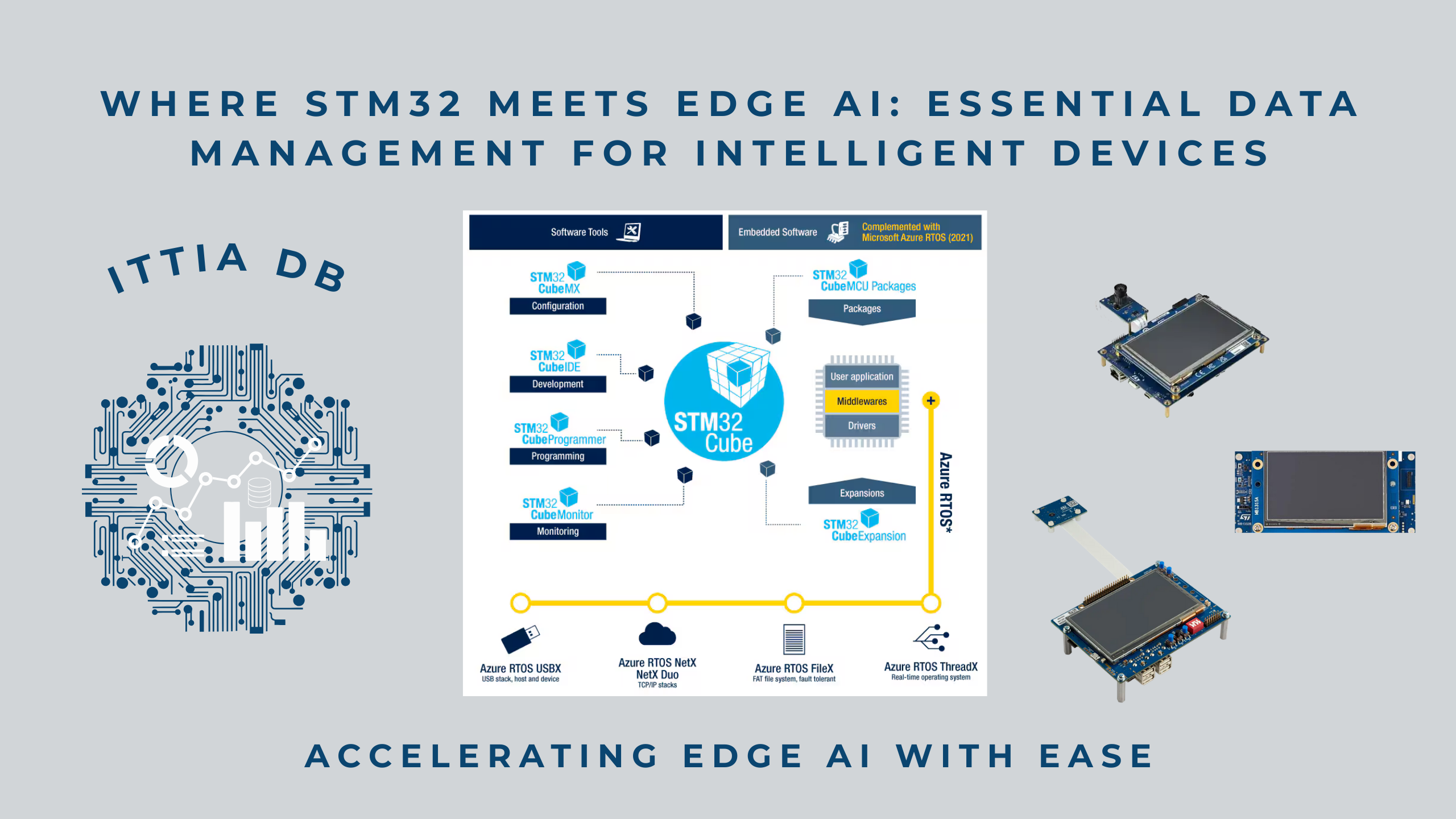

STM32 microcontrollers are widely adopted for their performance, low power consumption, and rich ecosystem. However, Edge AI applications often require developers to handcraft data pipelines using files, ring buffers, or custom logging code, introducing fragility and long debug cycles. The ITTIA DB Platform removes this burden by providing a ready-made, deterministic data foundation that integrates cleanly with STM32 and popular RTOS environments.

Ease of use is a critical factor in embedded systems development because it directly translates into saved time, lower cost, and fewer engineering resources. When data management, processing, and integration are intuitive and well-designed, teams spend less time writing and debugging custom infrastructure code and more time delivering real product features. Simple APIs, predictable behavior, and ready-made building blocks reduce trial-and-error, shorten learning curves, and minimize integration risk, allowing embedded teams to quickly move from prototype to production with greater confidence and efficiency.

Instead of spending engineering time writing and maintaining custom code for data persistence, recovery, filtering, and querying, developers can rely on well-defined, production-ready APIs to safely manage time-series and event data. This approach dramatically accelerates prototyping and system bring-up, results in cleaner and more maintainable firmware, minimizes edge cases and late-stage surprises, and shortens the path from an initial demo to a robust, deployable product.

Deterministic Data Management for Edge AI

Determinism is essential for AI systems, especially in embedded and edge environments, because AI decisions must be predictable, repeatable, and trustworthy, not just accurate. Without deterministic behavior in data ingestion, processing, and execution timing, AI models can produce inconsistent results, miss critical events, or interfere with real-time system operation. Deterministic data pipelines ensure that AI receives the same clean, time-aligned inputs under the same conditions, enabling stable inference, reliable validation, and explainable behavior. For safety-critical and production systems, determinism transforms AI from an experimental feature into a dependable component that engineers can certify, debug, and deploy with confidence.

Edge AI on STM32 often runs alongside control loops, communications stacks, and power-management tasks. The ITTIA DB Platform is designed for these constraints, offering deterministic performance, bounded resource usage, and power-fail safety. Data ingestion, queries, and updates complete within predictable time bounds, so AI workloads can share the device without disrupting real-time system behavior.
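To make "bounded resource usage" and "predictable time bounds" concrete, here is a minimal sketch of a statically allocated sample buffer in plain C. It is not the ITTIA DB API; the names `sample_t`, `sample_ring_t`, `RING_CAPACITY`, and the `ring_*` functions are hypothetical and exist only for illustration. Every operation touches a fixed number of memory locations, so its worst-case execution time does not depend on how much data has been ingested.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define RING_CAPACITY 8u  /* hypothetical size; must be a power of two */

typedef struct {
    uint32_t timestamp_ms;
    int16_t  value;
} sample_t;

typedef struct {
    sample_t slots[RING_CAPACITY]; /* fixed storage, no heap allocation */
    uint32_t next;                 /* slot the next push will write */
    uint32_t count;                /* valid samples, saturates at capacity */
} sample_ring_t;

void ring_init(sample_ring_t *r)
{
    /* Masking below is only correct for power-of-two capacities. */
    assert((RING_CAPACITY & (RING_CAPACITY - 1u)) == 0u);
    r->next = 0u;
    r->count = 0u;
}

/* Constant time: one store and two updates, no loops.
 * When the buffer is full, the oldest sample is overwritten. */
void ring_push(sample_ring_t *r, sample_t s)
{
    r->slots[r->next] = s;
    r->next = (r->next + 1u) & (RING_CAPACITY - 1u);
    if (r->count < RING_CAPACITY) {
        r->count++;
    }
}

size_t ring_count(const sample_ring_t *r)
{
    return r->count;
}

/* Reads the sample that is `age` pushes old (0 = newest).
 * Returns 1 on success, 0 if that sample is not in the buffer. */
int ring_peek(const sample_ring_t *r, size_t age, sample_t *out)
{
    if (age >= r->count) {
        return 0;
    }
    *out = r->slots[(r->next - 1u - (uint32_t)age) & (RING_CAPACITY - 1u)];
    return 1;
}
```

A buffer like this covers only the in-RAM ingestion step. Persistence, querying, and recovery are the parts the article argues should come from the database rather than from hand-written code.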

This determinism is critical for Edge AI systems that must remain reliable under power cycling, noise, and harsh operating conditions, while still capturing and processing meaningful data.
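One common way to survive power cycling is to store a checksum with each record so that a write interrupted by power loss can be detected and discarded at startup. The sketch below illustrates that general technique with a standard CRC-32. It is an assumption for illustration, not a description of how the ITTIA DB Platform implements power-fail safety, and `stored_record_t`, `record_seal`, and `record_is_intact` are hypothetical names.

```c
#include <stddef.h>
#include <stdint.h>

/* Bitwise CRC-32 (reflected polynomial 0xEDB88320), table-free to save flash. */
uint32_t crc32_ieee(const uint8_t *data, size_t len)
{
    uint32_t crc = 0xFFFFFFFFu;
    for (size_t i = 0; i < len; i++) {
        crc ^= data[i];
        for (int bit = 0; bit < 8; bit++) {
            crc = (crc & 1u) ? ((crc >> 1) ^ 0xEDB88320u) : (crc >> 1);
        }
    }
    return crc ^ 0xFFFFFFFFu;
}

/* Hypothetical on-storage record layout; all fields are 4 bytes wide,
 * so there is no padding before the checksum. */
typedef struct {
    uint32_t sequence;
    uint32_t timestamp_ms;
    int32_t  value;
    uint32_t crc;          /* CRC-32 of the fields above */
} stored_record_t;

/* Compute and store the checksum just before the record is written. */
void record_seal(stored_record_t *rec)
{
    rec->crc = crc32_ieee((const uint8_t *)rec, offsetof(stored_record_t, crc));
}

/* After a restart, a record whose checksum does not match is treated
 * as a torn write and skipped. Returns 1 if intact, 0 otherwise. */
int record_is_intact(const stored_record_t *rec)
{
    return rec->crc ==
           crc32_ieee((const uint8_t *)rec, offsetof(stored_record_t, crc));
}
```

The loop count depends only on the record size, so the check runs in a fixed, known time per record.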

Built-In Data Processing and Cleaning

AI does not start with models; it starts with clean, well-structured data. Edge AI data processing and cleaning are essential for turning raw sensor streams into reliable, actionable intelligence at the point where decisions are made. By filtering noise, handling missing data, enforcing physical limits, and extracting meaningful features directly on the device, edge systems ensure AI models receive stable, high-quality inputs in real time. Performing these steps locally reduces latency, preserves bandwidth, and removes the dependence on constant cloud connectivity, while deterministic processing keeps behavior predictable in resource-constrained and safety-critical environments. Together, on-device data processing and cleaning enable Edge AI systems to deliver accurate, explainable, and production-ready intelligence in the real world.

The ITTIA DB Platform provides database-level data processing capabilities such as clamping, lag functions, filtering, windowing, normalization, and gap handling. These features allow developers to prepare AI-ready data directly on STM32 devices, eliminating large portions of fragile preprocessing code.
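For readers unfamiliar with these operations, the following plain C sketch shows what clamping, gap handling, windowing, normalization, and a lag-1 difference look like when written by hand. It does not use or represent the ITTIA DB API. The names `cleaner_t`, `features_t`, `cleaner_step`, and `WINDOW`, and the hold-last-value gap policy, are assumptions made for illustration.

```c
#include <assert.h>
#include <stddef.h>

#define WINDOW 4u  /* hypothetical moving-average length */

typedef struct {
    float  lo, hi;          /* physical limits used for clamping */
    float  window[WINDOW];  /* most recent cleaned values */
    size_t filled;          /* valid entries in window */
    size_t next;            /* window slot to overwrite next */
    float  last;            /* previous cleaned value (lag-1) */
    int    has_last;        /* 0 until the first value is accepted */
} cleaner_t;

typedef struct {
    float value;  /* clamped and gap-filled reading */
    float norm;   /* min-max normalized to [0, 1] */
    float mean;   /* moving average over the window */
    float delta;  /* value minus the lag-1 value */
} features_t;

void cleaner_init(cleaner_t *c, float lo, float hi)
{
    assert(hi > lo);  /* normalization divides by (hi - lo) */
    c->lo = lo;
    c->hi = hi;
    c->filled = 0;
    c->next = 0;
    c->last = 0.0f;
    c->has_last = 0;
}

/* Processes one reading. `valid` is 0 when the sensor delivered nothing.
 * Returns 1 and fills *out, or 0 if the reading is missing and there is
 * no earlier value to hold. */
int cleaner_step(cleaner_t *c, int valid, float raw, features_t *out)
{
    float v;
    if (valid) {
        v = raw < c->lo ? c->lo : (raw > c->hi ? c->hi : raw);  /* clamp */
    } else if (c->has_last) {
        v = c->last;  /* gap handling: hold the last cleaned value */
    } else {
        return 0;
    }

    c->window[c->next] = v;
    c->next = (c->next + 1u) % WINDOW;
    if (c->filled < WINDOW) {
        c->filled++;
    }

    float sum = 0.0f;
    for (size_t i = 0; i < c->filled; i++) {  /* at most WINDOW iterations */
        sum += c->window[i];
    }

    out->value = v;
    out->norm  = (v - c->lo) / (c->hi - c->lo);
    out->mean  = sum / (float)c->filled;
    out->delta = c->has_last ? v - c->last : 0.0f;

    c->last = v;
    c->has_last = 1;
    return 1;
}
```

Even this small version carries state, edge cases at startup, and policy choices, which is the kind of preprocessing code the paragraph above describes moving into the database layer.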

As a result, Edge AI models receive stable, consistent inputs, improving accuracy, reducing false positives, and making behavior easier to explain and validate.

Enabling Edge AI Without Cloud Dependency

Edge AI data processing and management deliver significant advantages over cloud-centric approaches by bringing intelligence closer to where data is generated. Processing and managing data on the device removes the network round trip from the decision path, enabling real-time responses that a cloud-dependent design often cannot guarantee. It also lowers bandwidth and connectivity costs by transmitting only meaningful insights instead of raw data, while continuing to operate reliably even when networks are unavailable. By keeping sensitive data local, edge-based data management improves security and privacy, and deterministic on-device processing ensures predictable behavior for industrial, automotive, and safety-critical systems. These are benefits that a cloud-only architecture cannot provide.

By managing and processing data on-device, STM32 systems integrated with the ITTIA DB Platform can run analytics and AI exactly where data is generated, and continue to operate intelligently even when connectivity is limited or unavailable.

Developers can store AI inference results, confidence scores, and historical context locally, enabling smarter decisions, adaptive behavior, and continuous improvement over the device’s lifetime.
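As a rough illustration of how locally stored inference history can inform a decision, the sketch below keeps recent results in a fixed-size log and counts confident detections inside a time window. It is a hand-written stand-in, not the ITTIA DB API; `inference_t`, `inference_history_t`, `HISTORY_LEN`, and the `history_*` functions are hypothetical names.

```c
#include <stddef.h>
#include <stdint.h>

#define HISTORY_LEN 16u  /* hypothetical number of results kept */

typedef struct {
    uint32_t timestamp_ms;   /* tick time of the inference */
    uint8_t  class_id;       /* model output label */
    uint8_t  confidence_pct; /* confidence score, 0..100 */
} inference_t;

typedef struct {
    inference_t entries[HISTORY_LEN];
    size_t next;   /* slot to overwrite next */
    size_t count;  /* valid entries, saturates at HISTORY_LEN */
} inference_history_t;

void history_init(inference_history_t *h)
{
    h->next = 0;
    h->count = 0;
}

/* Stores one result, overwriting the oldest once the log is full. */
void history_append(inference_history_t *h, inference_t e)
{
    h->entries[h->next] = e;
    h->next = (h->next + 1u) % HISTORY_LEN;
    if (h->count < HISTORY_LEN) {
        h->count++;
    }
}

/* Counts stored results for `class_id` with confidence >= min_pct that
 * are at most window_ms older than now_ms. Unsigned subtraction keeps
 * the age correct across a 32-bit tick counter rollover. */
size_t history_count_recent(const inference_history_t *h, uint8_t class_id,
                            uint8_t min_pct, uint32_t now_ms,
                            uint32_t window_ms)
{
    size_t hits = 0;
    for (size_t i = 0; i < h->count; i++) {  /* at most HISTORY_LEN steps */
        const inference_t *e = &h->entries[i];
        uint32_t age_ms = now_ms - e->timestamp_ms;
        if (e->class_id == class_id && e->confidence_pct >= min_pct &&
            age_ms <= window_ms) {
            hits++;
        }
    }
    return hits;
}
```

An application might, for example, raise an alert only when `history_count_recent` reports several confident detections of the same class within a few seconds, rather than reacting to a single inference.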

A Scalable Path from Prototype to Product

A key advantage of combining the ITTIA DB Platform with STM32 devices is a smooth, scalable path from prototype to production without re-architecting the system or rewriting core infrastructure. The same deterministic data management, processing, and analytics used during early prototyping scale to deployed products, long-term field operation, and future feature expansion. This consistency reduces integration risk, simplifies validation and certification, and allows teams to iterate quickly while maintaining production-grade reliability.

For teams building Edge AI on STM32, this means:

- Lower development risk

- Faster time to market

- Easier maintenance and certification

- Confidence that systems will scale and evolve

Conclusion

Integrating the ITTIA DB Platform with STM32 devices creates a complete, production-grade foundation for Edge AI, one that is faster to develop, easier to maintain, and far more reliable to deploy at scale. By combining deterministic data management, built-in data cleaning and processing, and AI-ready data pipelines, the ITTIA DB Platform eliminates the need for fragile custom infrastructure and significantly reduces development risk. This allows engineering teams to focus on building differentiated functionality rather than reinventing data plumbing. The result is an Edge AI architecture that scales smoothly from prototype to product, operates predictably in real-world conditions, and transforms STM32-based systems into intelligent, resilient, and software-defined devices that continuously observe, learn, and improve throughout their lifecycle.