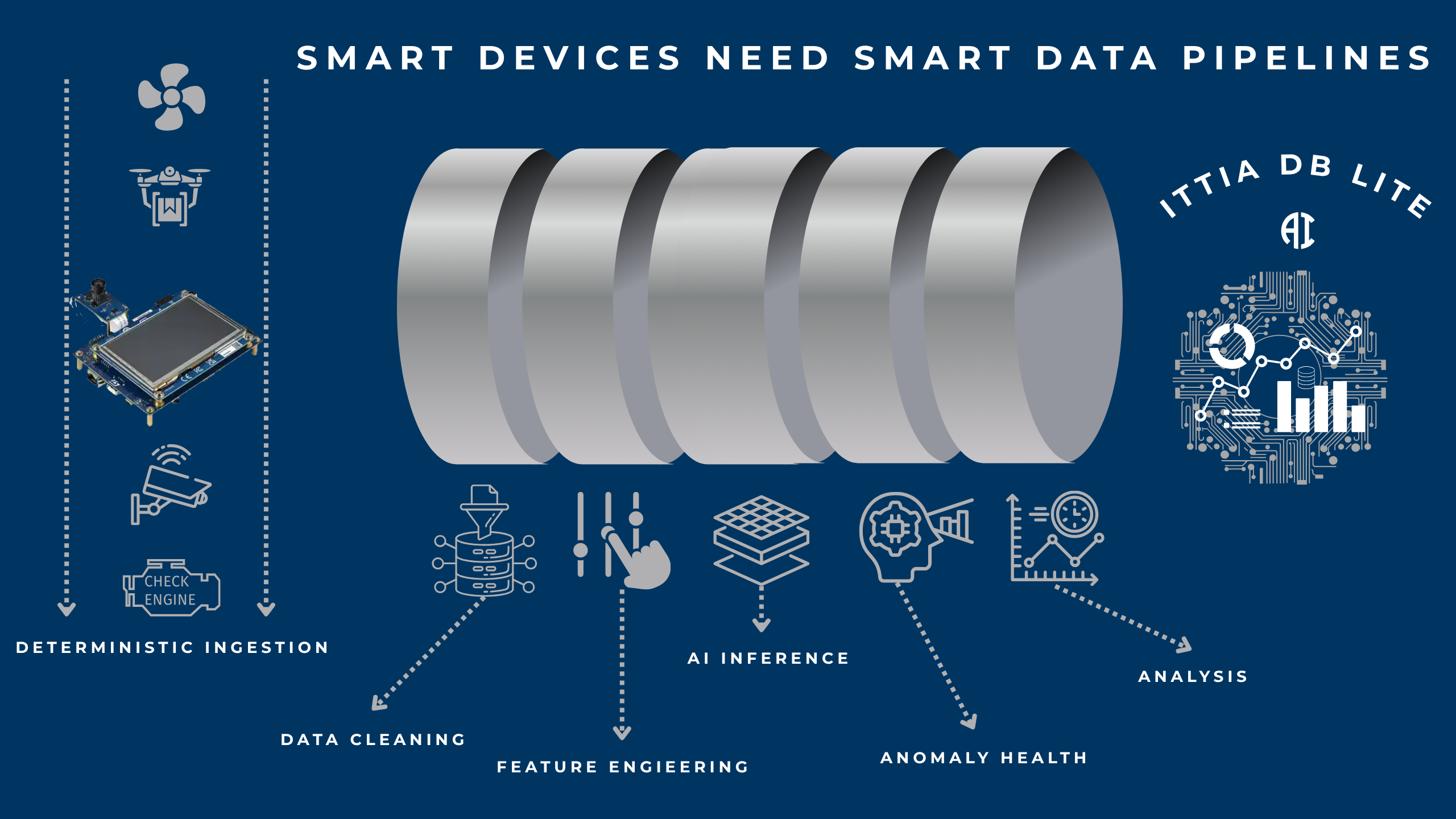

Smart Devices Need Smart Data Pipelines

Building a Deterministic Edge AI Pipeline with ITTIA DB Lite AI

Edge AI is no longer about squeezing a model onto a device; it is about building a reliable, real-time data pipeline that consistently turns raw signals into trustworthy decisions. Models are only as good as the data they receive, and at the edge, that data must be captured, structured, processed, and acted upon deterministically.

Data pipelines for the edge refer to the structured, end-to-end flow of data within a device, from capturing raw sensor signals to generating real-time insights and actions locally. This pipeline includes deterministic data ingestion, reliable time-series storage, feature engineering, and on-device AI inference, all operating within the constraints of embedded systems.

Unlike cloud-based pipelines, edge pipelines must ensure bounded latency, power-fail safety, and consistent behavior even without connectivity. By transforming raw data into actionable intelligence directly on the device, edge data pipelines enable faster decisions, improved reliability, and true real-time operation in applications such as industrial automation, automotive systems, medical devices, and smart IoT solutions.

Building data pipelines at the edge is challenging because systems must operate under strict resource constraints (limited CPU, memory, and storage) while still guaranteeing deterministic, real-time behavior. Data arrives from multiple sensors at high frequency and often through ISR/DMA bursts, requiring bounded-latency ingestion without blocking critical tasks. Storage is complicated by flash characteristics such as erase-before-write, wear limits, and unpredictable latency spikes (especially with NAND or SD), which can break real-time guarantees.

Ensuring power-fail safety and crash consistency is essential, as devices may lose power at any moment and must recover quickly without data corruption. In addition, edge pipelines must perform on-device data cleaning and feature engineering (e.g., time alignment, rolling windows, outlier handling) to support AI inference without relying on the cloud.

Connectivity is intermittent, so systems must function autonomously while selectively syncing meaningful data upstream. Finally, validating these pipelines requires proving worst-case execution time, eliminating latency outliers, and maintaining explainable, trustworthy data lineage from sensor to decision, all of which make edge data pipeline design significantly more complex than traditional cloud-based approaches.

This is where ITTIA DB Lite AI becomes essential. It provides the data infrastructure layer that transforms embedded devices into intelligent systems, without sacrificing real-time guarantees, reliability, or power-fail safety.

Why Data Pipelines Matter More Than Models

In many edge deployments, failures stem not from the AI model but from the data pipeline itself: unstructured or inconsistent sensor inputs, latency spikes during storage or retrieval, data loss during power interruptions, and the inability to reproduce or explain decisions. A true Edge AI system must therefore guarantee deterministic ingestion with no dropped or delayed samples, maintain precise time alignment across multiple sensors, enable reliable and consistent feature generation, and deliver bounded latency from input to decision. Without these foundations, AI behavior becomes unpredictable, and in real-world systems, unpredictability is failure.

The Edge AI Pipeline with ITTIA DB Lite AI

1) Signal Capture (Deterministic Data Acquisition)

A production-grade Edge AI pipeline begins with a structured and deterministic data acquisition layer, where devices continuously capture critical signals such as vibration, current, temperature, and pressure. At this stage, ITTIA DB Lite AI ensures ISR/DMA-safe ingestion, append-optimized writes, and fully non-blocking operations with no unpredictable delays. This guarantees that even under high-frequency sampling and burst conditions, data is captured reliably and consistently, resulting in zero data gaps, no timing drift, and a solid foundation for downstream analytics and AI inference.
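One common way to get non-blocking, bounded-time ingestion is a pre-allocated ring buffer between the interrupt handler and the storage task. The sketch below illustrates the idea only; it is not the ITTIA DB Lite AI API, and the class and method names (`SampleRing`, `push`, `pop`) are hypothetical. On a real MCU this would typically be written in C with atomic or volatile indices.

```python
class SampleRing:
    """Fixed-capacity single-producer/single-consumer ring buffer (illustrative)."""

    def __init__(self, capacity):
        # All storage is allocated once, up front; push and pop never allocate.
        self._slots = [None] * capacity
        self._capacity = capacity
        self._head = 0    # next slot to write (producer side, e.g. the ISR)
        self._tail = 0    # next slot to read (consumer side, e.g. the storage task)
        self.dropped = 0  # samples rejected because the buffer was full

    def push(self, timestamp_us, value):
        """Constant-time write that reports overflow instead of waiting."""
        next_head = (self._head + 1) % self._capacity
        if next_head == self._tail:
            # Buffer full: count the overflow so it is visible, never block.
            self.dropped += 1
            return False
        self._slots[self._head] = (timestamp_us, value)
        self._head = next_head
        return True

    def pop(self):
        """Constant-time read; returns None when no sample is waiting."""
        if self._tail == self._head:
            return None
        item = self._slots[self._tail]
        self._tail = (self._tail + 1) % self._capacity
        return item
```

One slot is deliberately left empty so that "full" and "empty" can be told apart without a shared counter, which means a ring of capacity N holds N - 1 samples. Counting overflows rather than silently discarding them makes any data gap detectable downstream.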

A production-grade Edge AI pipeline also extends beyond acquisition, through visualization and connectivity, to deliver complete, actionable intelligence. Acquisition remains the foundation: ITTIA DB Lite AI eliminates data gaps and timing drift at the source.

Building on this foundation, ITTIA Analitica transforms raw and processed data into real-time, on-device dashboards, enabling engineers and operators to visualize trends, monitor anomaly scores, track feature evolution, and understand system behavior with full data lineage and explainability.

At the same time, ITTIA Data Connect enables secure, selective data movement from device to gateway or cloud, ensuring that only meaningful insights, such as anomalies, summaries, or key performance metrics, are transmitted, even under intermittent connectivity. Together, these components create a seamless pipeline from sensor to insight to action, where data is reliably captured, intelligently processed, clearly visualized, and efficiently shared, without compromising determinism or real-time performance.
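Selective syncing under intermittent connectivity is often implemented as a bounded store-and-forward queue: only events above a threshold are queued, and the queue drains when the link is available. The following is a minimal sketch of that pattern, not the ITTIA Data Connect interface; `UplinkQueue`, `offer`, `flush`, and the `send` callback are all assumed names.

```python
from collections import deque


class UplinkQueue:
    """Bounded store-and-forward queue for noteworthy events (illustrative)."""

    def __init__(self, max_pending, threshold):
        # A bounded deque discards the oldest entry once max_pending is reached.
        self._pending = deque(maxlen=max_pending)
        self._threshold = threshold

    def offer(self, timestamp_us, anomaly_score):
        """Queue the event only if its score is worth transmitting."""
        if anomaly_score >= self._threshold:
            self._pending.append({"t_us": timestamp_us, "score": anomaly_score})
            return True
        return False

    def pending(self):
        return len(self._pending)

    def flush(self, send, link_up):
        """Send queued events in order; stop at the first failed transmission."""
        sent = 0
        while link_up and self._pending:
            if not send(self._pending[0]):
                break  # keep the event queued and retry on the next flush
            self._pending.popleft()
            sent += 1
        return sent
```

An event is removed from the queue only after `send` reports success, so a link failure in the middle of a flush does not lose queued data.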

2) Time-Series Data Management (Structured Storage)

Time-series data management is the structured capture, storage, and processing of data points indexed by time, enabling devices to track how signals such as vibration, temperature, or current evolve over time. At the edge, it provides critical benefits by ensuring deterministic, ordered data ingestion, efficient storage optimized for continuous streams, and fast access to recent and historical data for real-time analytics and AI. This allows edge systems to perform accurate feature generation, detect anomalies, and make reliable decisions locally, without dependence on the cloud.
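To make "indexed by time" concrete, here is a small in-memory sketch of an append-only series with ordered ingestion and range queries. It is an illustration under simplifying assumptions (strictly increasing timestamps, everything held in RAM); the `TimeSeries` class and its methods are hypothetical and do not represent the product's storage engine.

```python
from bisect import bisect_left, bisect_right


class TimeSeries:
    """Append-only series of (timestamp, value) pairs kept in time order."""

    def __init__(self):
        self._timestamps = []
        self._values = []

    def append(self, timestamp_us, value):
        # Enforce ordered ingestion: reject out-of-order or duplicate timestamps.
        if self._timestamps and timestamp_us <= self._timestamps[-1]:
            raise ValueError("timestamps must be strictly increasing")
        self._timestamps.append(timestamp_us)
        self._values.append(value)

    def range(self, start_us, end_us):
        """Return pairs with start_us <= timestamp <= end_us via binary search."""
        lo = bisect_left(self._timestamps, start_us)
        hi = bisect_right(self._timestamps, end_us)
        return list(zip(self._timestamps[lo:hi], self._values[lo:hi]))

    def latest(self, count):
        """Return the most recent `count` pairs, oldest first."""
        if count <= 0:
            return []
        return list(zip(self._timestamps[-count:], self._values[-count:]))
```

Because the data is already in time order, a range query only needs two binary searches to find its bounds, which is what makes recent-window access cheap for feature generation.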

In this model, raw data is stored as an ordered, timestamped time series, forming a consistent and queryable history of device behavior over time. ITTIA DB Lite AI enables this with flash-aware storage that avoids random write penalties, pre-allocated memory structures that ensure predictable and bounded resource usage, and crash-safe persistence mechanisms that guarantee no data corruption even during unexpected power loss. The result is a continuously reliable and accessible historical dataset, ready for real-time queries, feature generation, and trustworthy AI-driven decisions at the edge.
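A widely used technique for crash-safe, append-only persistence is to frame each record with a checksum and, on restart, keep only the frames that verify. The sketch below shows that general technique with a fixed record layout chosen for illustration; it is an assumption, not a description of how ITTIA DB Lite AI stores data, and `encode_frame` and `recover` are hypothetical helpers.

```python
import struct
import zlib

# Assumed layout: 64-bit timestamp (microseconds) + 32-bit float, little-endian.
RECORD = struct.Struct("<Qf")
FRAME_SIZE = RECORD.size + 4  # payload followed by a CRC32 trailer


def encode_frame(timestamp_us, value):
    """Serialize one sample and append the CRC32 of its payload."""
    payload = RECORD.pack(timestamp_us, value)
    return payload + struct.pack("<I", zlib.crc32(payload))


def recover(log_bytes):
    """Return records from every intact frame, stopping at the first bad one."""
    records = []
    for offset in range(0, len(log_bytes) - FRAME_SIZE + 1, FRAME_SIZE):
        payload = log_bytes[offset:offset + RECORD.size]
        (stored_crc,) = struct.unpack_from("<I", log_bytes, offset + RECORD.size)
        if zlib.crc32(payload) != stored_crc:
            break  # torn or corrupted write: everything before it is still valid
        records.append(RECORD.unpack(payload))
    return records
```

If power fails halfway through writing a frame, the partial frame either is too short to be read or fails its checksum, so recovery returns exactly the samples that were fully written before the interruption.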

3) Feature Engineering at the Edge (Where Intelligence Begins)

Feature engineering at the edge is the process of transforming raw sensor data into structured, meaningful inputs (features) directly on the device to enable real-time analytics and AI inference. Instead of sending raw signals to the cloud, the edge system computes features such as rolling averages, min/max values, frequency components, deltas, lag values, and anomaly scores in a deterministic and resource-efficient manner.

This ensures that data is cleaned, time-aligned, and optimized for model input with bounded latency and predictable behavior. The result is faster, more reliable decision-making on-device, reduced bandwidth usage, improved privacy, and the ability to operate independently even with limited or no connectivity.
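Time alignment usually means resampling one sensor's readings onto another sensor's timestamps. A minimal sketch, assuming both streams are already sorted by time, is an "as-of" join that takes the most recent reading and rejects it if it is too stale; the function name `align_latest` and the `max_age_us` parameter are illustrative choices.

```python
from bisect import bisect_right


def align_latest(reference_ts, other_ts, other_values, max_age_us):
    """For each reference timestamp, pick the latest other-sensor value.

    A value older than max_age_us is treated as missing (None) rather than
    silently reused, so gaps stay visible to later stages.
    """
    aligned = []
    for t in reference_ts:
        # Index of the last other-sensor sample taken at or before time t.
        i = bisect_right(other_ts, t) - 1
        if i >= 0 and t - other_ts[i] <= max_age_us:
            aligned.append(other_values[i])
        else:
            aligned.append(None)
    return aligned
```

For example, aligning a temperature stream onto vibration timestamps this way guarantees that a feature vector never mixes a current vibration sample with a temperature reading from an unrelated moment.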

At the device edge, raw signals are transformed into AI-ready features such as rolling averages, RMS/FFT components, lag features, delta changes, and outlier-controlled values using techniques like CLAMP.

ITTIA DB Lite AI enables this process through efficient time-window operations (sliding and rolling windows), allowing feature extraction to be performed directly on stored time-series data without unnecessary data movement. With deterministic execution and bounded compute time, the system ensures that feature generation remains predictable and consistent under all conditions, resulting in reliable, repeatable inputs for accurate on-device AI inference.
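The features named above can be sketched over a fixed-size sliding window as follows. This is a generic illustration rather than the product's window functions; `WindowFeatures` and `clamp` are hypothetical names, and the clamp limits are assumed to come from the sensor's valid range.

```python
import math
from collections import deque


def clamp(value, low, high):
    """Limit a value to [low, high] so a single outlier cannot dominate."""
    return max(low, min(high, value))


class WindowFeatures:
    """Rolling features over the most recent `window` clamped samples."""

    def __init__(self, window, low, high):
        self._samples = deque(maxlen=window)  # old samples fall out automatically
        self._low = low
        self._high = high

    def update(self, value):
        value = clamp(value, self._low, self._high)
        # The first sample has no predecessor, so it is its own lag value.
        previous = self._samples[-1] if self._samples else value
        self._samples.append(value)
        n = len(self._samples)
        return {
            "mean": sum(self._samples) / n,
            "rms": math.sqrt(sum(v * v for v in self._samples) / n),
            "min": min(self._samples),
            "max": max(self._samples),
            "delta": value - previous,  # change since the previous sample
            "lag1": previous,           # previous sample, as a lag feature
        }
```

The work per update is proportional to the window size, which is fixed at construction, so the compute time for each new sample has a known upper bound.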

4) On-Device AI Inference (Real-Time Decisions)

On-device AI inference is the process of running trained machine learning models directly on an embedded device, such as an MCU, MPU, or edge system, using locally available data, without relying on cloud connectivity. Instead of sending raw data to external servers, the device performs real-time predictions based on features generated from its own sensors, enabling immediate decision-making with minimal latency.

This approach improves reliability, as the system can operate even with no or intermittent connectivity, while also enhancing privacy and reducing bandwidth usage. At the edge, on-device inference must be tightly integrated with deterministic data pipelines to ensure consistent, explainable, and time-bounded responses for critical applications.

The processed features are fed into embedded AI models to perform tasks such as anomaly detection, classification, and Remaining Useful Life (RUL) estimation, enabling devices to interpret behavior and predict outcomes in real time. A critical requirement at the edge is that inference must execute within strict, bounded timing constraints to support reliable system operation.

With ITTIA DB Lite AI, data delivery to models is fully deterministic, eliminating latency spikes caused by storage or data access, while feature pipelines remain stable even under heavy load or continuous streaming conditions. The result is consistent, real-time intelligence delivered directly on the device, without dependence on cloud connectivity and without compromising predictability or system safety.
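As a stand-in for an embedded model, the sketch below scores a feature vector against a pre-computed baseline using z-scores. It is deliberately simple and is not a claim about which models the product runs; the baseline values, the default threshold of 3.0, and the function names are assumptions for illustration.

```python
def anomaly_score(features, baseline_mean, baseline_std):
    """Largest absolute z-score across the monitored features.

    The loop runs once per baseline feature, so the amount of work per
    inference is fixed by the model, not by the incoming data.
    """
    score = 0.0
    for name, mean in baseline_mean.items():
        z = abs(features[name] - mean) / baseline_std[name]
        score = max(score, z)
    return score


def classify(score, threshold=3.0):
    """Map a score to a label using a fixed decision threshold."""
    return "anomaly" if score >= threshold else "normal"
```

A model with a fixed number of operations per inference is what makes a bounded decision time achievable, provided the features arrive with bounded latency as well.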

5) Visualization & Explainability (Trust the System)

Visualization and explainability at the device level provide immediate insight into how data is being processed and how decisions are made, directly where those decisions occur.

By presenting real-time dashboards, trends, anomaly scores, and feature behavior on the device, engineers and operators can quickly understand system health, validate model performance, and trace outcomes back to the underlying data. This is especially critical in edge environments where connectivity may be limited and decisions must be trusted without cloud validation. Explainability ensures that every inference can be linked to its data lineage, from sensor to feature to model output, enabling debugging, regulatory compliance, and continuous improvement. The result is greater transparency, faster issue resolution, and increased confidence in deploying AI systems in safety-critical and real-time applications.

Edge AI systems must be able to explain their decisions, particularly in critical domains such as automotive (ECUs and SDVs), medical devices, and industrial automation, where trust, safety, and compliance are essential.

By integrating with ITTIA Analitica, developers and operators can visualize real-time trends and anomalies, track how features evolve over time, and validate model behavior directly on the device. This level of visibility ensures that every decision can be understood and traced back to its underlying data, resulting in transparent, auditable, and trustworthy AI systems that can be confidently deployed in real-world environments.
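Data lineage can be as simple as storing, with every decision, the time window, feature values, and model version that produced it. The record layout below is a hypothetical example of that idea and does not represent the ITTIA Analitica data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """One model output together with the evidence needed to explain it."""
    sensor_id: str
    window_start_us: int
    window_end_us: int
    features: dict
    model_version: str
    score: float
    label: str


def trace(decision, samples):
    """Return the raw (timestamp, value) samples that fed a decision."""
    return [
        (t, v) for t, v in samples
        if decision.window_start_us <= t <= decision.window_end_us
    ]


def explain(decision):
    """Render a one-line, human-readable audit entry."""
    return (
        f"{decision.sensor_id}: {decision.label} (score {decision.score:.2f}, "
        f"model {decision.model_version}) from samples in "
        f"[{decision.window_start_us}, {decision.window_end_us}] us"
    )
```

With a record like this, an engineer reviewing an alert can pull the exact raw samples behind it and re-run the same model version to reproduce the result.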

Determinism: The Core Advantage

Most data solutions are designed around average performance, but edge systems demand guarantees under worst-case conditions where timing cannot be compromised. With ITTIA DB Lite AI, operations are engineered for determinism, delivering bounded latency with clearly defined worst-case execution times (WCET), eliminating long-tail latency spikes (P99.999 stability), and avoiding unpredictable behaviors such as garbage collection pauses or background compaction delays. Writes occur immediately and predictably, ensuring consistent system responsiveness even under heavy load or constrained resources. The result is a system where every operation is time-bounded and reliable, because in edge environments, determinism means every decision happens on time, every time.
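The gap between average-case and worst-case behavior is easy to show with measured latencies. The sketch below summarizes a set of timing measurements using the nearest-rank percentile; the function names are illustrative, and measured maxima only show observed behavior, so they support but do not replace a formal WCET analysis.

```python
import math


def percentile(sorted_values, pct):
    """Nearest-rank percentile of a list sorted in ascending order."""
    rank = math.ceil(pct / 100.0 * len(sorted_values))
    return sorted_values[max(rank, 1) - 1]


def latency_report(latencies_us, deadline_us):
    """Summarize measured latencies against a hard deadline."""
    ordered = sorted(latencies_us)
    return {
        "p50": percentile(ordered, 50),
        "p99": percentile(ordered, 99),
        "max": ordered[-1],
        "misses": sum(1 for x in ordered if x > deadline_us),
    }
```

For 100 measurements where 98 take 10 µs, one takes 12 µs, and one takes 500 µs, the median and 99th percentile look healthy while the maximum misses a 100 µs deadline. That single outlier is exactly what a system tuned for average performance hides.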

Real-World Example: Motor Health Monitoring

Consider an embedded system monitoring an electric motor: it continuously captures current and vibration signals, stores them as ordered time-series data, generates features such as RMS, frequency components, and lag values, and feeds them into an on-device anomaly detection model to identify early signs of failure. When an issue is detected, the system can immediately trigger a local alert while selectively syncing a summary to the cloud.
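A compact version of this flow, assuming motor current is processed in non-overlapping windows and compared against a known healthy RMS level, might look like the following. The baseline, tolerance, and function names are hypothetical, and a real deployment would use a trained model rather than a single threshold.

```python
import math


def rms(values):
    """Root mean square of a non-empty sequence of samples."""
    return math.sqrt(sum(v * v for v in values) / len(values))


def monitor(current_samples, window, baseline_rms, tolerance):
    """Evaluate each full, non-overlapping window of motor current.

    Returns (window_index, rms_level, alert) tuples, where alert is True when
    the RMS deviates from the baseline by more than `tolerance` (a fraction).
    """
    results = []
    for start in range(0, len(current_samples) - window + 1, window):
        level = rms(current_samples[start:start + window])
        alert = abs(level - baseline_rms) > tolerance * baseline_rms
        results.append((start // window, level, alert))
    return results
```

Feeding it one healthy window followed by one window at twice the amplitude flags only the second window, which is the point at which the device would raise a local alert and queue a summary for upload.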

With ITTIA DB Lite AI, data is not lost even during power interruptions, features remain consistent over time, AI decisions are explainable and repeatable, and the entire system can operate fully offline when needed. This is why ITTIA DB Lite AI is purpose-built for Edge AI. It enables deterministic data pipelines from sensor to decision with bounded latency; provides flash-aware, reliable storage optimized for NOR, NAND, and SD behavior; ensures power-fail safety with fast recovery and no corruption; delivers MCU-optimized performance across platforms; and supports complete Edge AI workloads, including time-series data management, feature engineering, and real-time inference.

Final Thought

Edge AI is not just about deploying models; it is about building data systems that never fail. In real-world devices, failures rarely come from the model itself; they come from unreliable data pipelines, inconsistent inputs, or unpredictable system behavior. AI models alone do not create intelligent systems. Data does, and that data must be captured, structured, and processed with absolute determinism. At the edge, where decisions must be made instantly and often without connectivity, data must be reliable, time-aligned, and fully explainable to ensure trust and safety. With ITTIA DB Lite AI, you go beyond simply storing data: you establish a deterministic, power-fail-safe, and verifiable data foundation that enables real-time, trustworthy Edge AI systems to operate consistently under all conditions.